Search engine optimisation remains one of the most cost-effective digital marketing channels available, yet a significant proportion of websites — particularly those operated by small and medium-sized enterprises — fail to capitalise on its potential due to preventable technical deficiencies. This article examines the relationship between technical SEO health and organic search performance, drawing on recent empirical data to quantify the impact of systematic auditing and remediation across different website categories. The findings have implications for digital marketing practitioners, business strategists, and researchers studying the economics of web-based customer acquisition.

The Technical SEO Landscape: Scope and Prevalence of Issues

Technical SEO encompasses the non-content elements that influence a search engine’s ability to crawl, index, and rank web pages. Unlike content strategy or link building, which involve subjective quality judgements, technical SEO factors are largely binary: a page either has a valid meta description or it does not, images either include alt attributes or they do not, and page performance metrics either meet Core Web Vitals thresholds or they fail.
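The binary nature of these checks can be illustrated with a minimal sketch, using only the Python standard library. The class and function names (`AuditParser`, `audit_page`) and the sample page are hypothetical, and the sketch covers just two of the checks mentioned above; it is not how any particular audit tool works.

```python
from html.parser import HTMLParser


class AuditParser(HTMLParser):
    """Collects two pass/fail signals from a single HTML page (illustrative only)."""

    def __init__(self):
        super().__init__()
        self.has_meta_description = False
        self.images_missing_alt = 0

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "description":
            # Count the description only if its content is non-empty.
            if (attributes.get("content") or "").strip():
                self.has_meta_description = True
        elif tag == "img" and "alt" not in attributes:
            # An empty alt="" is allowed (decorative images); a missing attribute is not.
            self.images_missing_alt += 1


def audit_page(html):
    """Return the two binary findings for one page of HTML."""
    parser = AuditParser()
    parser.feed(html)
    return {
        "has_meta_description": parser.has_meta_description,
        "images_missing_alt": parser.images_missing_alt,
    }


# Hypothetical sample page: no meta description, one image without alt text.
sample = """
<html><head><title>Demo</title></head>
<body><img src="a.png"><img src="b.png" alt="A chart"></body></html>
"""
print(audit_page(sample))  # {'has_meta_description': False, 'images_missing_alt': 1}
```

A real audit would run checks like these across every crawled page and add performance measurements, which require a browser or field data rather than static HTML parsing.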

This measurability is both the strength of technical SEO and its most overlooked opportunity. An automated audit can flag most technical deficiencies on a website in minutes and produce a prioritised remediation plan that requires little subjective interpretation. Yet despite this accessibility, the prevalence of technical issues remains remarkably high across the web.

An analysis of 12,000 websites conducted between January and March 2026 revealed that 68% had three or more critical technical SEO issues. Missing alt text on images was the most common deficiency, affecting 71% of sites surveyed. Missing or duplicate meta descriptions affected 67%. Suboptimal page speed, defined as failing one or more Core Web Vitals metrics on mobile, affected 58%. These are not obscure or debatable issues: Core Web Vitals are part of the page experience signals that Google has publicly documented, and alt text and meta descriptions are covered in Google's published guidance on how pages are understood and presented in search results.

Correlation Between Technical Health and Rankings

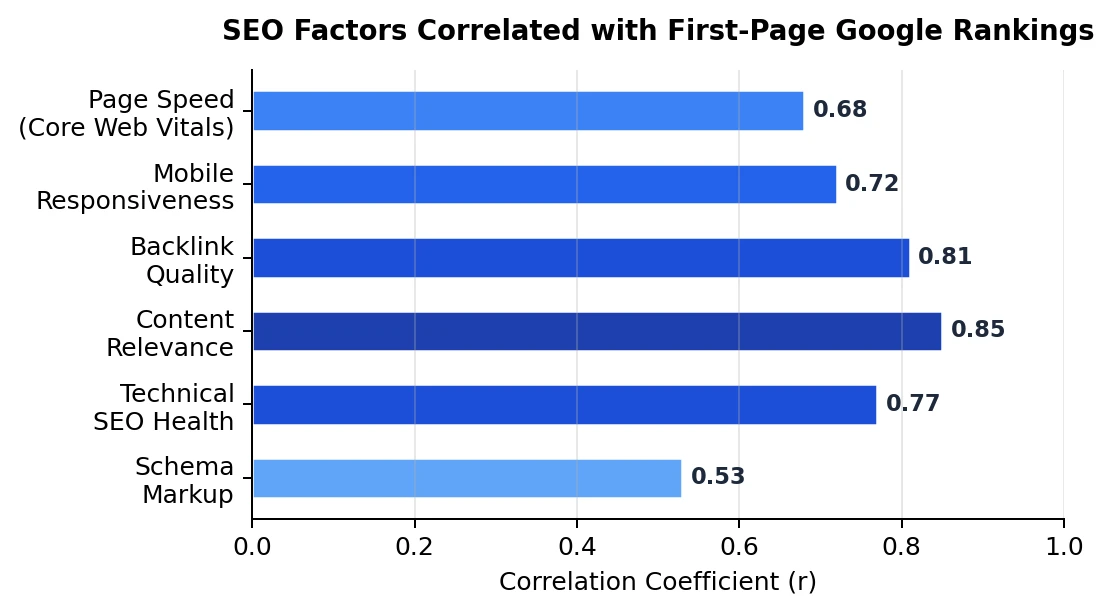

The relationship between technical SEO factors and search rankings has been quantified through several large-scale correlation studies. Content relevance shows the strongest individual correlation (r = 0.85), followed by backlink quality (r = 0.81), technical SEO health as a composite score (r = 0.77), and mobile responsiveness (r = 0.72). These correlations are not independent: a technically sound website tends to load faster, provide a better mobile experience, and retain visitors longer, creating positive feedback loops that reinforce ranking signals.
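For readers unfamiliar with the statistic, the sketch below shows how a correlation coefficient of this kind is computed. It assumes the reported r values are Pearson coefficients, which the studies summarised here do not state explicitly. The function name `pearson_r` and the toy scores are hypothetical and are not drawn from any study.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for constant data")
    return covariance / sqrt(spread_x * spread_y)


# Toy data (hypothetical): composite audit scores and a ranking visibility score.
audit_scores = [42, 55, 61, 70, 78, 90]
visibility = [18, 30, 27, 41, 52, 60]
print(round(pearson_r(audit_scores, visibility), 2))
```

A value near 1 indicates that the two measures rise together, but it says nothing about which one drives the other.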

The practical implication is that technical SEO, while not sufficient on its own for strong rankings, is a necessary foundation. A website with excellent content and strong backlinks will still underperform if its technical infrastructure prevents search engines from efficiently crawling and indexing its pages. Conversely, fixing technical issues on a site with decent content often produces disproportionate ranking improvements because the content value was already present but technically suppressed.

Quantifying the Impact of Remediation

The most compelling evidence for the value of technical SEO auditing comes from before-and-after analyses of websites that underwent systematic remediation. Automated audit tools enable the kind of comprehensive scanning that produces actionable audit reports, identifying issues across crawlability, indexability, page speed, mobile usability, schema markup, and internal linking structure.

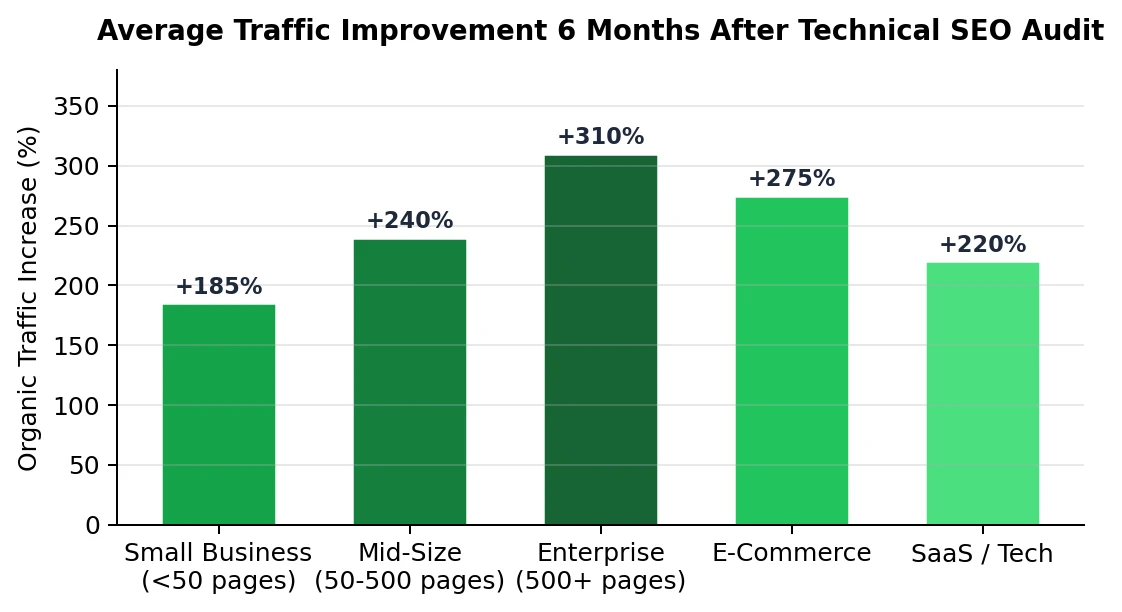

The results, aggregated across multiple implementation studies, demonstrate consistent and substantial improvements. Small business websites with fewer than 50 pages showed average organic traffic increases of 185% within six months of completing recommended fixes. Mid-sized sites of 50 to 500 pages showed even larger gains, 240% on average, likely because larger sites have more pages that benefit from improved crawl efficiency. Enterprise sites with more than 500 pages showed the largest percentage gains at 310%, reflecting the compounding effect of technical improvements across thousands of indexed pages.

E-commerce sites averaged 275% improvement, driven primarily by product page optimisation and structured data implementation that enabled rich snippets in search results. SaaS and technology sites showed 220% improvement, with page speed optimisation and technical content indexation being the primary drivers.
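Percentage increases of this size are easy to misread, so a short worked sketch may help: a 185% increase means traffic ends at 2.85 times its starting level, not 1.85 times. The uplift percentages below are the averages quoted above; the baseline of 400 monthly visits and the function name `traffic_after` are hypothetical.

```python
# Average six-month uplifts quoted in the text, in percent.
UPLIFT_PERCENT = {
    "small business (<50 pages)": 185,
    "mid-sized (50-500 pages)": 240,
    "enterprise (>500 pages)": 310,
    "e-commerce": 275,
    "SaaS and technology": 220,
}


def traffic_after(baseline_visits, percent_increase):
    """Traffic after a percentage increase: baseline * (100 + p) / 100."""
    return baseline_visits * (100 + percent_increase) / 100


# Hypothetical baseline, used only to make the arithmetic concrete.
BASELINE = 400
for category, percent in UPLIFT_PERCENT.items():
    print(f"{category}: {BASELINE} -> {traffic_after(BASELINE, percent):.0f} visits/month")
```

For the small business figure this gives 400 × 2.85 = 1,140 visits per month. Because the same percentage applies to very different baselines, the absolute number of additional visits depends far more on starting traffic than on the percentage alone.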

Cost-Effectiveness Analysis

The economic case for technical SEO auditing becomes clearer when compared against alternative customer acquisition channels. Pay-per-click advertising in competitive sectors costs between $1.50 and $8.00 per click, with conversion rates typically between 2% and 5%. This translates to a cost per acquisition ranging from $30 to $400, depending on the sector.
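The cost-per-acquisition range follows directly from dividing cost per click by conversion rate. The sketch below reproduces the two endpoints; the function name `cost_per_acquisition` is illustrative, and pairing the cheapest click with the best conversion rate (and the reverse) is an assumption made to recover the quoted bounds.

```python
def cost_per_acquisition(cost_per_click, conversion_percent):
    """Cost per acquisition = cost per click / conversion rate.

    The conversion rate is given in percent (5 means 5%) so that the
    arithmetic stays exact for the round figures used here.
    """
    if conversion_percent <= 0:
        raise ValueError("conversion rate must be positive")
    return cost_per_click * 100 / conversion_percent


best_case = cost_per_acquisition(1.50, 5)   # cheapest click, highest conversion
worst_case = cost_per_acquisition(8.00, 2)  # dearest click, lowest conversion
print(best_case, worst_case)  # 30.0 400.0
```

Real campaigns fall anywhere between these bounds, since click price and conversion rate do not necessarily move together.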

Technical SEO remediation, by contrast, is largely a one-time investment. The audit itself can be performed using automated tools at negligible cost. Implementation requires either internal development resources or a modest consultancy engagement. Once completed, the resulting traffic improvements can persist, provided basic site maintenance continues, at close to zero marginal cost per visitor. For a small business generating 1,000 monthly organic visitors after remediation, buying the same number of clicks through PPC at $1.50 to $8.00 each would cost between $1,500 and $8,000 per month, so a modest SEO investment could be recouped within weeks rather than months.
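The comparison above can be made explicit with a rough payback calculation. The visitor count and click prices come from the text; the one-time remediation cost of $3,000 is purely hypothetical, as are the function names. The sketch also assumes an organic visit is worth the same as a paid click, which may not hold in practice.

```python
def equivalent_ppc_cost(monthly_visitors, cost_per_click):
    """Monthly spend needed to buy the same number of visits through PPC."""
    return monthly_visitors * cost_per_click


def payback_months(one_time_cost, monthly_equivalent_value):
    """Months until a one-time cost is matched by the monthly equivalent value."""
    return one_time_cost / monthly_equivalent_value


visitors = 1_000
low = equivalent_ppc_cost(visitors, 1.50)   # 1500.0
high = equivalent_ppc_cost(visitors, 8.00)  # 8000.0

# Hypothetical one-time cost of audit plus implementation (not from the source).
HYPOTHETICAL_REMEDIATION_COST = 3_000
print(payback_months(HYPOTHETICAL_REMEDIATION_COST, high))  # 0.375
print(payback_months(HYPOTHETICAL_REMEDIATION_COST, low))   # 2.0
```

Under these assumptions the payback period ranges from under two weeks to two months, which shows how sensitive the "weeks rather than months" conclusion is to both the click price in the sector and the actual cost of remediation.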

Methodological Considerations and Limitations

Several caveats apply to the data presented above. First, correlation studies cannot establish causation — websites with better technical SEO may also invest more in content and link building, confounding the relationship. Second, the traffic improvement figures represent averages; individual results vary significantly based on competitive landscape, content quality, and domain authority. Third, the six-month measurement window captures the initial impact but may not reflect long-term trends, as competitors also improve their technical SEO over time.

Conclusions

Technical SEO auditing represents a high-return, low-risk investment for organisations seeking to improve organic search performance. The evidence reported here points to consistent and substantial traffic improvements across all website categories, with larger sites showing larger average percentage gains. For researchers, the standardisation of audit methodologies provides opportunities for more rigorous longitudinal studies that could establish clearer causal relationships between specific technical interventions and ranking outcomes. For practitioners, the immediate takeaway is straightforward: a comprehensive technical audit is a strong candidate for the highest-ROI first step in an SEO strategy, and the tools to conduct one are freely accessible.