The application of machine learning to financial markets has evolved from a niche academic pursuit into a mainstream analytical framework. Nowhere is this transformation more visible than in cryptocurrency markets, where extreme volatility, continuous trading cycles, and abundant data streams create conditions uniquely suited to algorithmic analysis. This article examines the current state of ensemble machine learning models applied to cryptocurrency price forecasting, evaluating their methodological foundations, comparative performance against traditional approaches, and implications for both institutional and retail market participants.

The Limitations of Traditional Forecasting in Crypto Markets

Traditional financial forecasting relies heavily on two pillars: fundamental analysis, which evaluates intrinsic value based on financial statements and economic indicators, and technical analysis, which identifies patterns in historical price and volume data. Both approaches face significant challenges when applied to cryptocurrency assets.

Fundamental analysis, effective for equities with quantifiable earnings and cash flows, struggles with digital assets that lack conventional valuation metrics. Bitcoin generates no revenue, pays no dividends, and has no earnings per share. While on-chain metrics such as hash rate, active addresses, and transaction volume serve as proxy fundamentals, their relationship to price is non-linear and context-dependent. Technical analysis, meanwhile, assumes that historical patterns repeat. That assumption holds reasonably well in mature markets with stable participant behaviour, but proves less reliable in crypto markets, where the participant base is expanding rapidly and behavioural dynamics can shift within a few months.

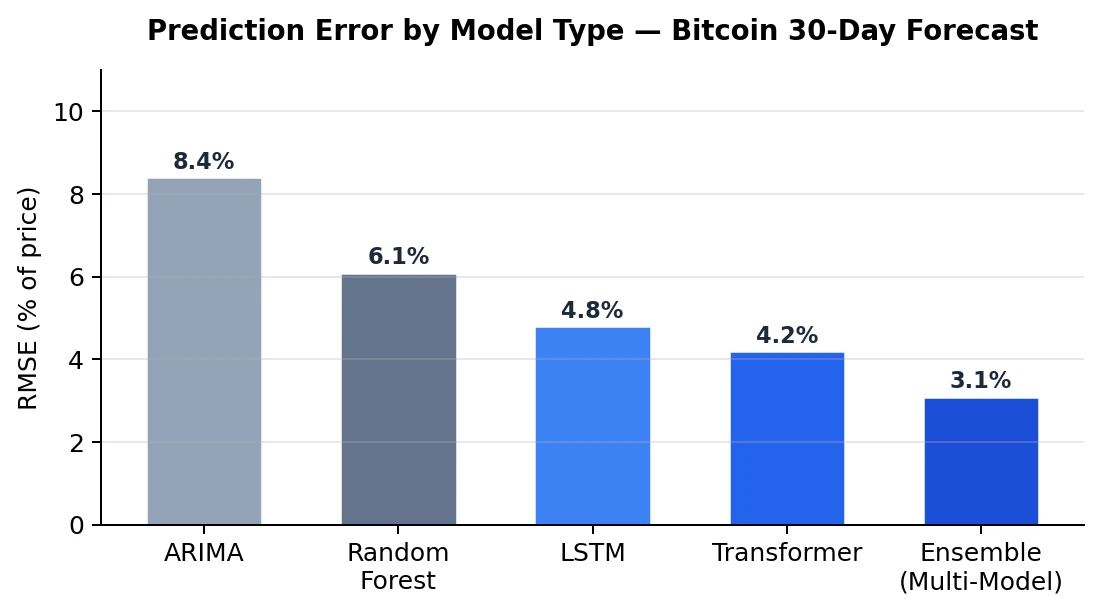

Empirical evidence supports this scepticism. Studies conducted between 2022 and 2025 report that pure technical analysis achieves directional accuracy of approximately 40-45% for Bitcoin price movements over 7-day horizons, which is no better than, and if anything slightly below, the 50% expected from random guessing on an up-or-down call. ARIMA models, the workhorse of traditional time-series forecasting, show root-mean-square errors (RMSE) of 8-9% of the actual price, too large to support actionable trading decisions.
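The two metrics quoted above can be made concrete with a short sketch. It assumes that a forecast's direction is measured relative to the price at the time it was issued, that an unchanged price counts as a miss, and that "RMSE relative to actual price" means RMSE divided by the mean actual price over the evaluation window; the cited studies may normalise differently. Under these definitions a coin flip scores about 0.5 on directional accuracy, which is the baseline the 40-45% figure should be compared against. The price series are hypothetical.

```python
import math


def directional_accuracy(prev_prices, actual_prices, predicted_prices):
    """Share of forecasts whose predicted move (up or down from the
    previous price) has the same sign as the realised move.
    A flat realised or predicted move counts as a miss."""
    hits = 0
    for prev, actual, pred in zip(prev_prices, actual_prices, predicted_prices):
        if (pred - prev) * (actual - prev) > 0:
            hits += 1
    return hits / len(actual_prices)


def relative_rmse(actual_prices, predicted_prices):
    """Root-mean-square error divided by the mean actual price (a fraction)."""
    n = len(actual_prices)
    mse = sum((a - p) ** 2 for a, p in zip(actual_prices, predicted_prices)) / n
    mean_price = sum(actual_prices) / n
    return math.sqrt(mse) / mean_price


# Hypothetical 7-day-ahead forecasts, for illustration only.
prev = [100.0, 100.0, 100.0, 100.0]
actual = [105.0, 95.0, 110.0, 90.0]
predicted = [103.0, 102.0, 104.0, 93.0]

print(directional_accuracy(prev, actual, predicted))  # 0.75
print(relative_rmse(actual, predicted))
```

Note that the two metrics answer different questions: a model can call direction well while still missing the size of the move, which is what RMSE penalises.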

The Architecture of Ensemble Approaches

Ensemble methods address the fundamental weakness of individual models: each captures certain patterns while remaining blind to others. By combining multiple independent models, each trained on different feature sets, using different algorithms, and optimised for different time horizons, ensemble systems can reach accuracy levels that individual component models rarely match on their own, because errors that are not perfectly correlated partly cancel when forecasts are combined.
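As a minimal illustration of why combining helps, the sketch below uses the simplest combination rule, an equal-weight average of three component forecasts. The component models and their numbers are hypothetical. Because squared error is convex, the averaged forecast's mean squared error can never exceed the average of the components' errors, although it is not guaranteed to beat the single best component; how much it gains depends on how weakly the components' errors are correlated.

```python
def mse(actual, forecast):
    """Mean squared error between two equal-length sequences."""
    return sum((a - f) ** 2 for a, f in zip(actual, forecast)) / len(actual)


def average_ensemble(forecasts):
    """Equal-weight ensemble: mean of the component forecasts at each time step."""
    return [sum(step) / len(step) for step in zip(*forecasts)]


# Hypothetical component forecasts whose errors point in different directions.
actual = [100.0, 102.0, 101.0, 104.0]
components = {
    "model_a": [101.0, 104.0, 100.0, 103.0],
    "model_b": [98.0, 101.0, 103.0, 105.0],
    "model_c": [100.5, 100.0, 101.5, 106.0],
}

ensemble = average_ensemble(list(components.values()))
for name, forecast in components.items():
    print(name, mse(actual, forecast))
print("ensemble", mse(actual, ensemble))
```

In this example the ensemble also beats every component, but only because the components' errors happen to offset one another; with highly correlated errors the gain shrinks towards zero.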

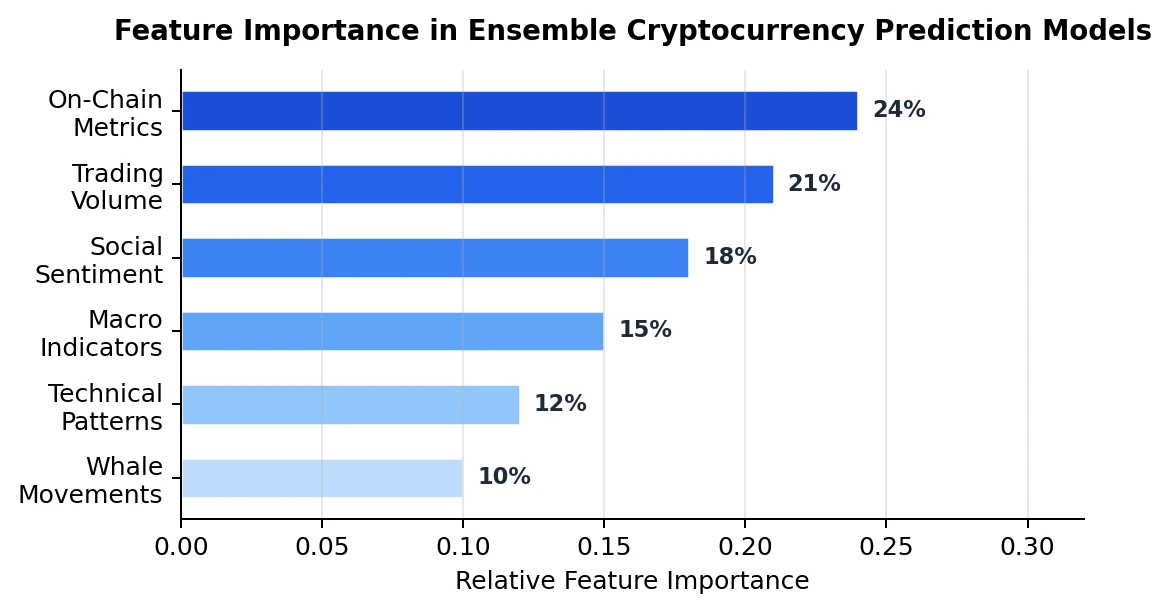

The most effective ensemble architectures in current cryptocurrency forecasting typically integrate three layers. The first layer consists of time-series models, primarily LSTM and GRU recurrent neural networks, trained on historical price and volume data and often paired with attention mechanisms that learn how much weight to give each past observation (frequently, but not necessarily, favouring the most recent ones). The second layer incorporates natural language processing models that quantify market sentiment from news articles, social media posts, and forum discussions, producing a real-time sentiment index that correlates with short-term price movements. The third layer adds macroeconomic and on-chain features (interest rate differentials, dollar index movements, whale wallet activity, and exchange inflow/outflow data), processed through gradient-boosted decision trees.
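The attention step in the first layer can be sketched as scaled dot-product attention pooling over a sequence of recurrent hidden states. In a real model the hidden states would come from an LSTM or GRU and the query vector would be learned during training; here both are hand-written, hypothetical values. The point of the sketch is that the weights depend on how well each state matches the query, not on its position in time, so recent observations receive more weight only when the learned query favours them.

```python
import math


def softmax(scores):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(hidden_states, query):
    """Scaled dot-product attention pooling.

    hidden_states: list of vectors, one per time step (oldest first).
    query: vector of the same dimension (learned in a real model).
    Returns the attention-weighted context vector and the weights.
    """
    dim = len(query)
    scores = [
        sum(h_i * q_i for h_i, q_i in zip(h, query)) / math.sqrt(dim)
        for h in hidden_states
    ]
    weights = softmax(scores)
    context = [
        sum(w * h[d] for w, h in zip(weights, hidden_states)) for d in range(dim)
    ]
    return context, weights


# Hypothetical hidden states for three time steps, oldest to newest.
states = [[0.9, 0.5], [0.4, 0.2], [0.1, 0.0]]
query = [1.0, 1.0]
context, weights = attention_pool(states, query)
print(weights)
```

Here the oldest state receives the largest weight because it aligns best with the query; a different learned query could just as easily favour the newest observations.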

The ensemble combines these layers using a dynamic weighting system that adjusts each component's contribution according to its recent forecasting performance. In practice, this means that during periods of high social media activity, when sentiment signals tend to be most informative, the sentiment layer receives greater weight, while in macro-driven markets the economic features layer dominates. This adaptive architecture is credited with much of the accuracy advantage visible in the data.
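The article does not specify the weighting rule, so the sketch below assumes one common choice: weights proportional to the inverse of each layer's mean squared error over a recent window. The layer names, error values, and the small `eps` guard against division by zero are hypothetical.

```python
def dynamic_weights(recent_errors, eps=1e-8):
    """Inverse-MSE weighting over a recent window of forecast errors.

    recent_errors: dict mapping layer name -> list of recent errors
    (forecast minus actual). Layers that have been more accurate lately
    get proportionally more weight; the weights sum to 1.
    """
    inverse = {}
    for name, errors in recent_errors.items():
        mse = sum(e * e for e in errors) / len(errors)
        inverse[name] = 1.0 / (mse + eps)
    total = sum(inverse.values())
    return {name: value / total for name, value in inverse.items()}


def combine(forecasts, weights):
    """Weighted sum of the layers' current forecasts."""
    return sum(weights[name] * forecasts[name] for name in forecasts)


# Hypothetical recent errors: the sentiment layer has been most accurate.
recent_errors = {
    "time_series": [1.0, -1.0, 2.0],
    "sentiment": [0.5, -0.5, 0.5],
    "macro_onchain": [2.0, -3.0, 1.0],
}
weights = dynamic_weights(recent_errors)
forecasts = {"time_series": 101.0, "sentiment": 103.0, "macro_onchain": 99.0}
print(weights)
print(combine(forecasts, weights))
```

Recomputing the weights as each new observation arrives, for example over a rolling window, is what makes the weighting dynamic; the window length is a tuning choice the source does not specify.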

Performance Evaluation and Transparency

A critical challenge in evaluating forecasting platforms is the prevalence of survivorship bias and selective reporting. Many commercial prediction services publish only their successful calls while quietly omitting failures, creating an artificially inflated track record. Academic-grade evaluation requires comprehensive logging: every prediction timestamped at the point of issuance, with outcomes recorded against actual market data at the specified horizon.
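A minimal sketch of such a log is shown below, assuming a simple up/down call per prediction. The class and field names are hypothetical. Entries are only ever appended, never deleted; each is timestamped at issuance and can be resolved only once its horizon has elapsed, so failures stay in the record and count toward the hit rate.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class Prediction:
    asset: str
    issued_at: datetime          # timestamp recorded at issuance
    horizon: timedelta           # how far ahead the call applies
    reference_price: float       # price when the prediction was issued
    predicted_direction: int     # +1 = up, -1 = down
    outcome_price: Optional[float] = None

    @property
    def resolves_at(self) -> datetime:
        return self.issued_at + self.horizon

    @property
    def hit(self) -> Optional[bool]:
        if self.outcome_price is None:
            return None
        move = self.outcome_price - self.reference_price
        return move * self.predicted_direction > 0


class PredictionLog:
    """Append-only log: predictions are added and resolved, never removed."""

    def __init__(self) -> None:
        self._entries: List[Prediction] = []

    def issue(self, asset, reference_price, predicted_direction, horizon, now=None):
        if now is None:
            now = datetime.now(timezone.utc)
        prediction = Prediction(asset, now, horizon, reference_price, predicted_direction)
        self._entries.append(prediction)
        return prediction

    def resolve(self, prediction, outcome_price, at):
        if prediction.outcome_price is not None:
            raise ValueError("prediction already resolved")
        if at < prediction.resolves_at:
            raise ValueError("horizon has not elapsed yet")
        prediction.outcome_price = outcome_price

    def hit_rate(self) -> Optional[float]:
        resolved = [p for p in self._entries if p.outcome_price is not None]
        if not resolved:
            return None
        return sum(p.hit for p in resolved) / len(resolved)


t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
week = timedelta(days=7)
log = PredictionLog()
up_call = log.issue("BTC", 100.0, +1, week, now=t0)
down_call = log.issue("BTC", 100.0, -1, week, now=t0)
log.resolve(up_call, 105.0, at=t0 + week)    # correct call
log.resolve(down_call, 110.0, at=t0 + week)  # failed call, still counted
print(log.hit_rate())  # 0.5
```

In a production setting the same guarantees would normally be enforced by the storage layer (for example, an append-only table or signed, timestamped records) rather than by an in-memory class.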

Platforms that maintain this level of transparency provide a genuinely useful resource for the research community. An AI-powered financial forecasting platform that publishes complete, verifiable prediction histories — including failures — enables independent researchers to conduct their own statistical analysis of model performance. This open approach to evaluation aligns with the principles of reproducible research and represents the standard to which all commercial forecasting tools should be held.
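As an example of the kind of analysis a complete history makes possible, a researcher could test whether a published hit rate is distinguishable from the 50% coin-flip baseline with a one-sided exact binomial test, sketched below using only the standard library. This assumes the predictions are independent; overlapping horizons (for instance, daily 7-day calls) are autocorrelated, and treating them as independent would overstate significance. The track record used here is hypothetical.

```python
from math import comb


def binomial_p_value(hits, n, p0=0.5):
    """One-sided exact binomial test.

    Returns P(X >= hits) for X ~ Binomial(n, p0): the probability of
    seeing at least this many correct calls if the true hit rate were p0.
    """
    return sum(comb(n, k) * p0**k * (1 - p0) ** (n - k) for k in range(hits, n + 1))


# Hypothetical track record: 60 correct calls out of 100.
print(binomial_p_value(60, 100))
```

With 100 hypothetical calls, 60 hits gives a p-value of roughly 0.03; the same 60% hit rate over only 20 calls would be far weaker evidence, which is one reason complete histories matter more than headline percentages.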

Implications for Market Efficiency

The improving accuracy of machine learning forecasting models raises important questions about market efficiency. The efficient market hypothesis, in its semi-strong form, posits that all publicly available information is already reflected in asset prices, so that no strategy based on public information can systematically earn risk-adjusted excess returns. If ensemble models consistently achieve directional accuracy of 75% or more, this would appear to contradict the hypothesis, provided that the accuracy survives transaction costs and translates into excess returns.
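A back-of-the-envelope calculation shows why directional accuracy alone does not settle the question. Assume, purely for illustration, that each trade gains or loses the same fractional move `m` depending on whether the direction call is right, and pays a round-trip cost `c`. With accuracy `p`, the expected return per trade is then p·m - (1 - p)·m - c = (2p - 1)·m - c. The parameter values below are hypothetical.

```python
def expected_trade_return(accuracy, move, cost):
    """Expected return per trade under a symmetric-move assumption.

    accuracy: probability the direction call is correct
    move: fractional price move gained when right and lost when wrong
    cost: round-trip transaction cost per trade, as a fraction
    """
    return accuracy * move - (1 - accuracy) * move - cost


# Hypothetical parameters: 2% moves, 0.2% round-trip cost.
print(expected_trade_return(0.75, 0.02, 0.002))  # positive edge
print(expected_trade_return(0.52, 0.02, 0.002))  # above 50% accuracy, but a loss after costs
```

Real return distributions are not symmetric, and the size of the move is rarely the same on hits and misses, so this only frames the question rather than answering it.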

A plausible resolution lies in the fact that cryptocurrency markets are still maturing. Retail participation is high, information asymmetry is significant, and behavioural biases are well documented. These inefficiencies may create extractable alpha that machine learning models can capture. However, as algorithmic trading adoption increases and more participants employ similar models, these inefficiencies are likely to diminish gradually, a process already observed in traditional equity markets over the past two decades.

Conclusions and Future Directions

Ensemble machine learning models represent a meaningful advance in cryptocurrency price forecasting, with reported directional accuracy approximately 30-35 percentage points above that of traditional technical analysis. The key technical innovations (multi-layer architecture, dynamic weight adjustment, and comprehensive feature engineering) are well established in the literature and increasingly accessible to practitioners through cloud computing platforms.

For future research, three areas merit attention. The first is the integration of reinforcement learning for adaptive position sizing alongside price predictions. The second is the development of causal inference frameworks that distinguish genuine predictive relationships from spurious correlations in high-dimensional feature spaces. The third, and perhaps the most important, is the establishment of standardised evaluation benchmarks that would allow meaningful cross-platform performance comparison. The absence of such benchmarks currently undermines the field’s credibility and makes it difficult for both researchers and practitioners to distinguish genuine capability from marketing.